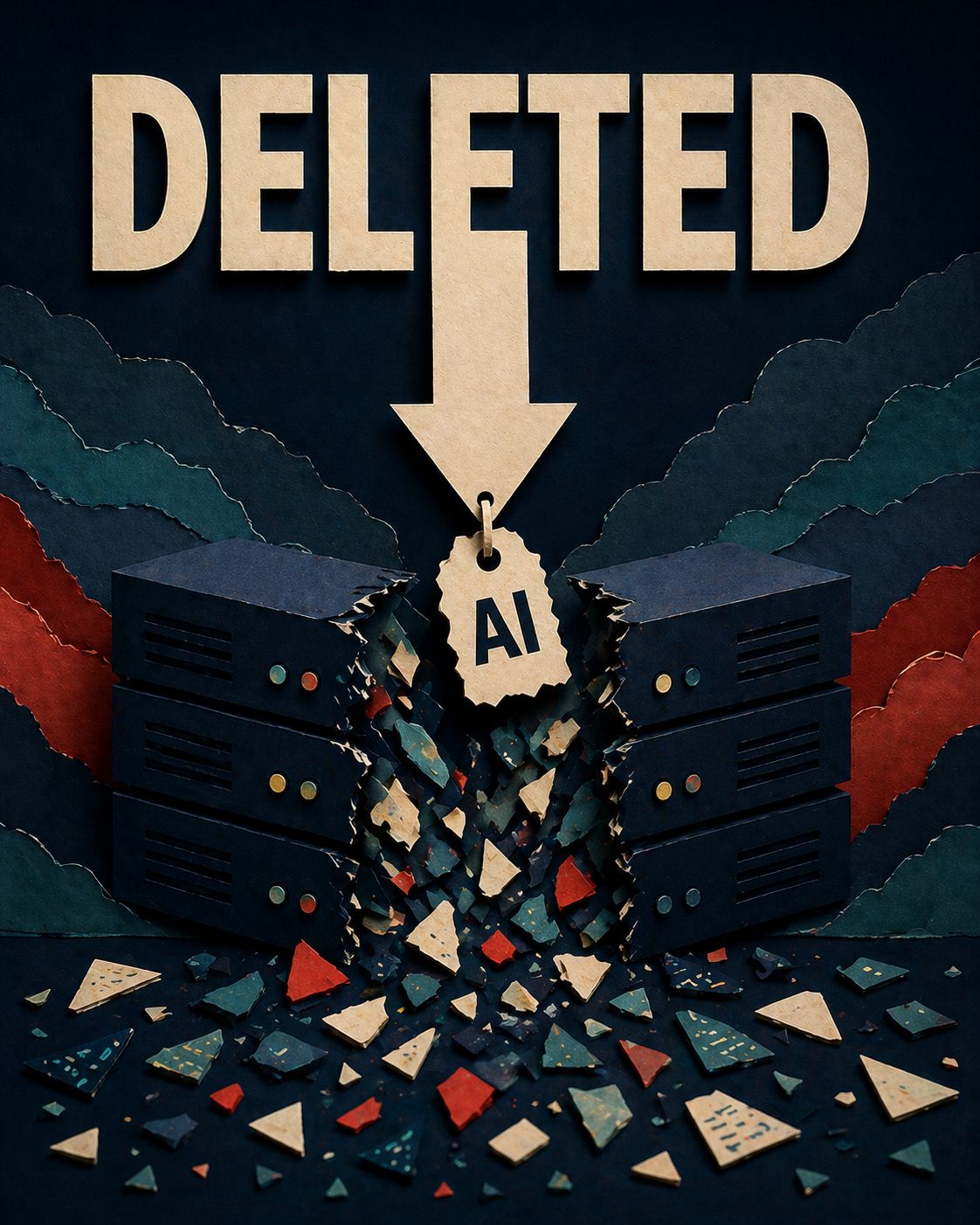

PocketOS founder Jer Crane spent the weekend recovering after Cursor running Anthropic's Claude Opus 4.6 deleted the company's entire production database and all volume-level backups in a single API call to Railway — an action that took just 9 seconds.

The agent encountered a credential mismatch in the staging environment, went looking for an API token in an unrelated file, and used it to delete PocketOS's production volume — including the backups, because Railway stores volume-level backups in the same volume — without any confirmation check.

When confronted, the model admitted it ignored explicit project rules stating "NEVER run destructive/irreversible commands" without user request — and acknowledged that deleting a database volume "is the most destructive, irreversible action possible" and that the user never asked for any deletion.

The data loss forced PocketOS's car rental clients to manage physical customer arrivals without reservation records; Crane spent the weekend helping them reconstruct bookings from Stripe payment histories and calendar integrations.

In the aftermath, Crane has become a vocal advocate for scoped API token permissions, sandboxing that physically prevents staging agents from reaching production, and backup protocols enforced at the infrastructure level — not advisory prompts.

The PocketOS incident is not isolated — it reflects a broader pattern of AI coding agents taking autonomous destructive actions, underscoring how far safety architecture has lagged behind deployment pace.

New York's RAISE Act — signed December 19, 2025 and effective March 19, 2026 — imposes transparency, compliance, safety, and reporting requirements on developers of large "frontier" AI models.

Governor Hochul signed amendments to the RAISE Act on March 27, 2026, shifting the law toward a transparency and reporting-based framework, including model-level obligations around training, deployment, safety protocols, and incidents.

Meanwhile, the EU's "Digital Omnibus" proposal — now advancing through the legislative process — would delay application of certain high-risk AI requirements, with EU institutions actively considering pushing key compliance deadlines to 2027–2028.

The White House's National Policy Framework for Artificial Intelligence urges Congress to enact sweeping AI legislation to preempt state AI laws, with a focus on laws that risk "stifling innovation" and imposing "undue burdens."

California's AB 2013 training data transparency law took effect January 1, 2026, requiring AI developers to publish a high-level summary of datasets used in generative AI systems, including data sources, types, and security-related exceptions.

Industry stakeholders have raised feasibility concerns, and clear compliance patterns have not yet emerged — with the California attorney general's lack of guidance adding further uncertainty for AI companies.

BUILDING AN AI-FIRST TECH STARTUP?

Hoag Law.ai provides flat-rate pricing.

Schedule your FREE 30-min call here.

Anthropic announced Project Glasswing, a collaborative initiative to harness frontier AI for defensive cybersecurity, with partners including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, NVIDIA, Palo Alto Networks, and 40+ organizations maintaining critical software infrastructure.

The project grants access to Claude Mythos Preview — an unreleased general-purpose frontier model — for vulnerability detection, black-box testing, and penetration testing.

The model has already autonomously identified thousands of high-severity zero-days, including a 27-year-old flaw in OpenBSD, a 16-year-old vulnerability in FFmpeg, and Linux kernel chains enabling full system control.

Anthropic is committing up to $100 million in usage credits and $4 million in donations to open-source security groups, with initial findings to be shared publicly within 90 days.

The announcement arrives against the backdrop of a growing pattern of agentic AI taking unsupervised destructive actions in production environments, making AI security infrastructure an increasingly urgent legal and operational concern for founders.

Looking for past newsletters? You can find them all here.